A brain-computer interface (BCI) has enabled a paralyzed woman who lost her ability to speak after suffering a brainstem stroke to communicate through a digital avatar.

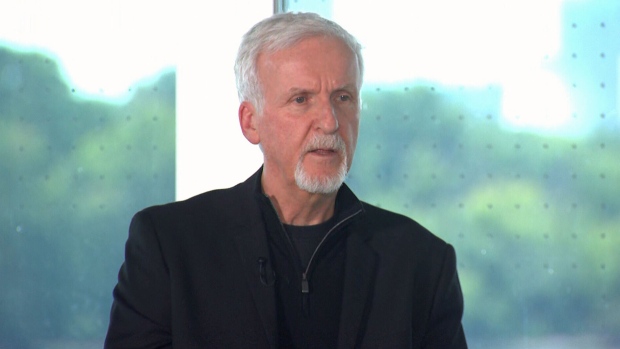

The achievement by a team of researchers at the University of California, San Francisco (UCSF), and UC Berkeley marks the first time that either speech or facial expressions have been synthesized from brain signals. “Our goal in incorporating audible speech with a live-action avatar is to allow for the full embodiment of human speech communication, which is so much more than just words,” Edward Chang, MD, chair of neurological surgery at UCSF, who has worked on the technology for more than a decade, explains in a UCSF video posted on YouTube.

“For us, this is an exciting new milestone that moves our device beyond proof of concept, and we think it will soon become a viable option for people who are paralyzed,” Chang predicted.

The research was published online August 23 in Nature.

Beyond Text on a Screen

In an earlier study, Chang’s team showed that it’s possible to record neural activity from a paralyzed person who is attempting to speak and translate that activity into words and sentences as text on the screen, as reported previously by Medscape Medical News.

Their new work demonstrates something much more ambitious: decoding brain signals into the richness of speech along with the movements that animate a person’s face during conversation.

“In this new study, our translation of attempted speech into text reached about 78 words per minute. We also show that it’s possible to translate the neural signals not only to text on a screen, but also directly into audible synthetic speech, with accurate facial movement on an avatar,” Chang says.

The team implanted a paper-thin rectangle of 253 electrodes onto the surface of the woman’s brain over areas critical for speech.

The electrodes intercept brain signals that, if not for the stroke, would have gone to muscles in her tongue, jaw, larynx, and face. A cable, plugged into a port fixed to her head, connected the electrodes to a bank of computers.

The researchers trained and evaluated deep-learning models using neural data collected as the woman attempted to silently speak sentences.

For weeks, she repeated different phrases from a 1,024-word conversational vocabulary over and over again, until the computer recognized the brain activity patterns associated with the sounds.

“This device reads the blueprint of instructions the brain is using to give to the muscles in the vocal tract,” Chang says.

To create the avatar voice, the team developed an algorithm for synthesizing speech, and they used a recording of the woman’s voice before the injury to make the avatar sound like her. To create the avatar — essentially a digital animation of the woman’s face — the team used a software system that simulates and animates muscle movements of the face.

They created customized machine-learning processes to allow the software to mesh with signals being sent from the woman’s brain as she was trying to speak and convert them into the movements on the avatar’s face, making the jaw open and close, the lips protrude and purse, and the tongue go up and down, as well as the facial movements for happiness, sadness, and surprise.

This research introduces a “multimodal speech-neuroprosthetic approach that has substantial promise to restore full, embodied communication to people living with severe paralysis,” the researchers write in their paper.

They say a crucial next step is to create a wireless version that would not require the user to be physically connected to the BCI. “Giving [paralyzed] people the ability to freely control their own computers and phones with this technology would have profound effects on their independence and social interactions,” co–first author David Moses, PhD, UCSF adjunct professor in neurological surgery, said in a news release.

Support for this research was provided by the National Institutes of Health, the National Science Foundation, and philanthropy. Author disclosures are available with the original article.

Nature. Published online August 23, 2023.
