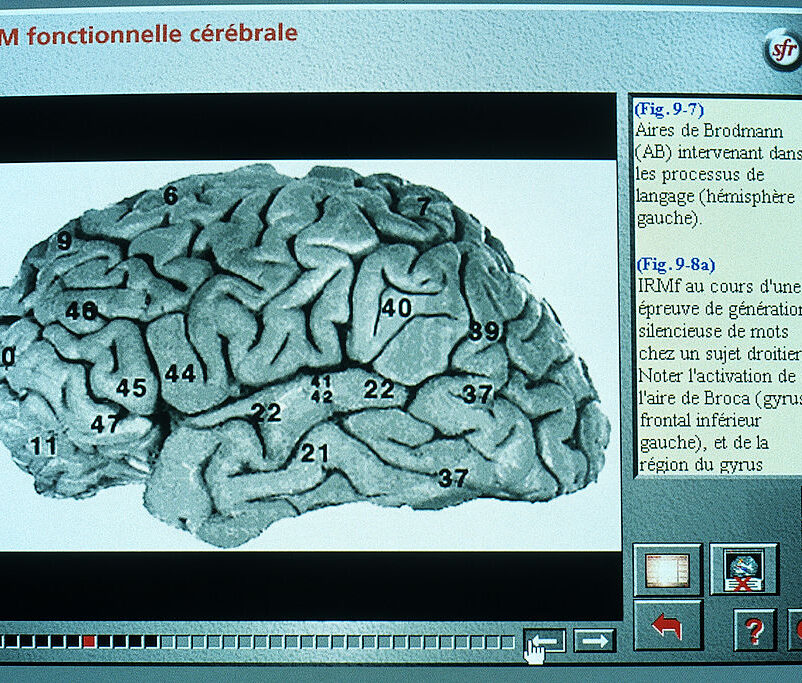

Minors can use NCMEC’s new “Take It Down” tool to flag explicit material of themselves that has been—or that they think will be—shared online.

Getty Images

Meta is funding a national effort to help kids and teens get their nude or sexually explicit images removed from social media. Could the giant’s own move toward end-to-end encryption get in the way?

The National Center for Missing & Exploited Children has launched a platform, funded by Meta, to help kids and teens have naked or sexual photos and videos of themselves removed from social media.

The new service for minors, called Take It Down, was unveiled one year after the release of a similar tool for adults known as StopNCII (short for non-consensual intimate imagery). For now, Take It Down works only across the five platforms that have agreed to participate: Meta-owned Facebook and Instagram, as well as Yubo, OnlyFans and Pornhub.

“We created this system because many children are facing these desperate situations,” said NCMEC’s president and CEO Michelle DeLaune. “Our hope is that children become aware of this service, and they feel a sense of relief that tools exist to help take the images down.”

How social media companies are protecting children and teens—and where they’re falling short—has emerged as a top bipartisan tech policy issue in the new Congress, and one where lawmakers stand the best chance at passing legislation. Still, there’s a long road ahead for any proposals to create or strengthen safeguards, and with no laws imminent, NCMEC continues to act as a bridge between online companies and law enforcement on child safety issues. In 2021, the nonprofit received nearly 30 million tips from across the industry about apparent sextortion and child sexual abuse material on their platforms. A majority were reported by platforms owned by Meta.

Minors and parents can use NCMEC’s free, anonymous tool to flag explicit material of an underage person that has been, or that they think will be, spread online. Once they’ve selected the sensitive photos or videos on their device, the platform generates the equivalent of a digital fingerprint of each file (called a “hash”). NCMEC then shares those hashes with the participating social media sites so they can try to find the content, restrict or remove it, and keep monitoring for it.

The photos or videos in question notably do not leave the device and are not viewed by anyone, according to NCMEC. (The hash also keeps victims unidentifiable both to NCMEC and to the social media app, signaling only that an image is harmful and should be taken down.) NCMEC said there have been no known breaches of its hash database that could potentially expose an individual’s identity.
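For readers curious about how an on-device fingerprint can work, here is a minimal sketch in Python. It is an illustration only, not NCMEC's implementation: the article does not say which hashing algorithm Take It Down uses, so SHA-256 is assumed here, and the function names `fingerprint_file` and `is_flagged` are hypothetical. The point is that only the short hash string would need to be shared, never the file itself.

```python
import hashlib


def fingerprint_file(path, chunk_size=65536):
    """Return a hex SHA-256 digest of the file at `path`.

    The file is read locally in chunks; only the resulting digest
    string would ever need to be sent anywhere. SHA-256 is an
    assumption made for this sketch, not the tool's confirmed algorithm.
    """
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # Read fixed-size chunks until read() returns empty bytes (end of file).
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_flagged(path, flagged_hashes):
    """Hypothetical platform-side check: does this file match a shared hash?"""
    return fingerprint_file(path) in flagged_hashes
```

A cryptographic hash like this one matches only byte-for-byte identical files, so a resized or re-encoded copy would produce a different fingerprint. Systems built to catch altered copies generally rely on other kinds of hashing designed for that purpose.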

But one of the biggest obstacles for this effort is the rise of end-to-end encryption. “When tech companies implement end-to-end encryption, with no preventive measures built in to detect known child sexual abuse material, the impact on child safety is devastating,” DeLaune, NCMEC’s president, told the Senate Judiciary Committee at a hearing this month. The elephant in the room is that the financial backer and most prominent company involved in Take It Down, Meta, is moving at a clip toward end-to-end encryption on both Instagram and Messenger.

How the two organizations can reconcile this is “the million dollar question,” NCMEC vice president Gavin Portnoy told Forbes, noting that NCMEC still does not know exactly what Meta’s plans are for encryption and what safeguards may be in place. (Meta said its plans are a work in progress and that various encryption features are being tested on Instagram and Messenger.)

“We’re building Take It Down for the way the internet and the [electronic service provider] is situated today,” Portnoy said. “That really is a bridge we’re going to have to cross when we have a better understanding of what [Meta’s] end-to-end encryption environment looks like—and if there is a world where Take It Down can work.”

Meta’s global head of safety, Antigone Davis, told Forbes that “encryption will result in certain images not being identified by us” but that it’s becoming an industry standard in messaging that is vital to user privacy and security. Using hash technology and supporting NCMEC on projects like this one are among several ways Meta is trying “to really get in front of the problem,” she said. (Both parties declined to say how much money Meta has given to NCMEC to build Take It Down.)

“There’s no panacea for addressing these issues,” Davis said in an interview, describing Meta’s approach as “multi-faceted.” In addition to collaborating on this new system aimed at thwarting sextortion and the non-consensual spread of intimate images, Meta does not allow adult strangers to connect with minors through its messaging features, she said. It’s also using classifiers to prevent minors’ accounts and content from being pushed to adult Meta users who’ve exhibited suspicious behavior online, she added.

“I hope that people who’ve had images shared like that when they were young by anybody—by a parent who was exploiting them, by another adult who was exploiting them, by an ex-boyfriend or girlfriend—that they will feel interested to use this tool,” Davis said.

NCMEC said it has pitched some 200 other electronic service providers that have submitted information to its “CyberTipline” on joining the Take It Down program. It remains to be seen who else—beyond the small handful of social media players championing it today—will get on board. Yubo, for its part, said it hopes these early adopters will push others to join.

“It takes more than just government intervention to mitigate risk and solve issues online,” Yubo CEO Sacha Lazimi said over email. “All actors need to play their part. … Collaboration is key to identifying solutions to big challenges, so we do hope to see more platforms join the Take It Down initiative.”